A search engine's main goal is to get internet users to adopt it as their primary search provider. The more users it attracts, the more ad revenue it collects. To win those users, it needs to provide the most relevant results possible, presented in a functional and logical manner.

Presenting the results in a functional manner is something that can be done relatively easily through good web design and development practices. Google, for example, is known for its incredibly simple design. Providing relevant results, however, is something that search engine companies are always trying to improve.

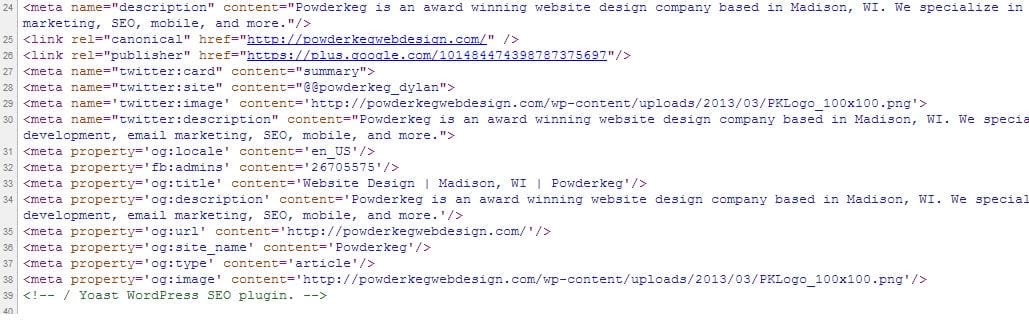

Yahoo, Google, Bing and the many other providers all have secret algorithms that they use to determine how a site should be ranked. They have “bots” or servers that are constantly out visiting websites and looking for key areas that provide insight as to how relevant those pages are. The hope, from a webmaster’s perspective, is that when these bots visit your site they are able to recognize the key topics of your page. Then, the next time a user searches for a topic that is covered on your website, your webpage will be one of the top results.
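To make the idea of "key areas" more concrete, here is a minimal Python sketch of how a bot might pull a few topic signals out of a page. It is only an illustration: the real algorithms are secret, and the choice of areas (the title, the meta description, and the `h1`/`h2` headings) is an assumption, as are the names `KeyAreaParser`, `extract_key_areas`, and `SAMPLE_PAGE`.

```python
from html.parser import HTMLParser

# Hypothetical sample page used only for illustration.
SAMPLE_PAGE = (
    "<html><head><title>Bike Repair Tips</title>"
    '<meta name="description" content="How to fix a flat tire">'
    "</head><body><h1>Fixing a Flat</h1><p>Body text</p>"
    "<h2>Tools</h2></body></html>"
)


class KeyAreaParser(HTMLParser):
    """Collects text from a few areas assumed to hint at a page's topic."""

    def __init__(self):
        super().__init__()
        self.areas = {"title": [], "h1": [], "h2": [], "meta_description": []}
        self._current = None  # tag whose text is currently being read

    def handle_starttag(self, tag, attrs):
        if tag in ("title", "h1", "h2"):
            self._current = tag
        elif tag == "meta":
            attr = dict(attrs)
            # Only the description meta tag is recorded in this sketch.
            if (attr.get("name") or "").lower() == "description":
                self.areas["meta_description"].append(attr.get("content") or "")

    def handle_endtag(self, tag):
        if tag == self._current:
            self._current = None

    def handle_data(self, data):
        # Keep non-empty text that appears inside a tracked tag.
        if self._current and data.strip():
            self.areas[self._current].append(data.strip())


def extract_key_areas(html):
    """Return a dict mapping each tracked area to the text found in it."""
    parser = KeyAreaParser()
    parser.feed(html)
    parser.close()
    return parser.areas
```

Calling `extract_key_areas(SAMPLE_PAGE)` returns the text found in each tracked area. A real bot presumably weighs many more signals than these, so treat this as a picture of the general idea rather than of any provider's method.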

The tricky part of making sure your website is considered relevant is that the algorithms the search engines use are secret. It’s hard to know which aspects of a webpage carry the most weight in ranking. Not only are the algorithms unknown, they are also constantly changing. The reason for this is two-fold: first, the search engines keep updating their code to make their results better; and second, web developers begin to figure out the algorithms and “cheat” the system. When people understand what the search engine bots are looking for, they can build their web pages to match those criteria.

Creating content that matches search engine algorithms can be done in a good way, often referred to as Search Engine Optimization (SEO), where the content on a webpage is genuine and is also written with attention to how search engines will review it. Alternatively, content can be created to “trick” the bot into thinking it is genuine and relevant to a user’s search requests. For example, you used to be able to create a webpage that repeated the same keywords over and over again to make the bots think that was what your website was all about. However, the bots have become much smarter and can no longer be tricked that easily.
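As a rough illustration of why simple keyword repetition is easy to spot, the Python sketch below measures how much of a text is taken up by its most frequent word. This is not how any particular search engine works. The function names `keyword_density` and `looks_stuffed`, and the threshold of 0.3, are arbitrary choices made for this example.

```python
import re
from collections import Counter


def keyword_density(text, top_n=3):
    """Return the top_n most frequent words with their share of all words."""
    words = re.findall(r"[a-z']+", text.lower())
    if not words:
        return []
    counts = Counter(words)
    total = len(words)
    return [(word, count / total) for word, count in counts.most_common(top_n)]


def looks_stuffed(text, threshold=0.3):
    """Flag text where one word's share exceeds an arbitrary threshold."""
    density = keyword_density(text, top_n=1)
    return bool(density) and density[0][1] > threshold
```

A page that reads "cheap shoes cheap shoes buy cheap shoes cheap cheap shoes" is flagged because one word makes up half of the text, while an ordinary sentence about a shoe store is not. A check this crude would also misfire on very short texts, which is one reason real ranking systems are assumed to rely on far more than word counts.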

Advances in both the search engines’ algorithms and web development have produced a reasonably successful combination of more relevant results and higher-quality content. Good web developers design sites that are SEO friendly and then populate them with quality content to grab the attention of the bots. Websites are built to present the right data in the way that best helps search engines understand what each page is about.